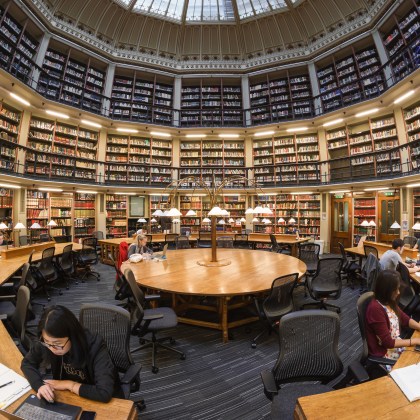

Google Code-in 2019: The next generation of technologists contribute to Wikimedia’s code

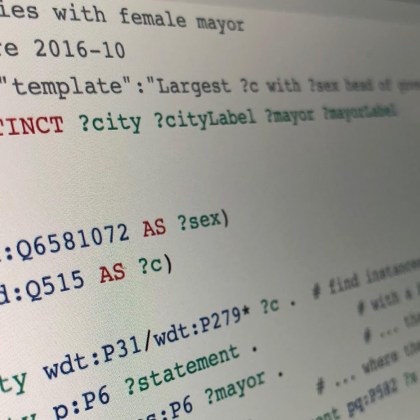

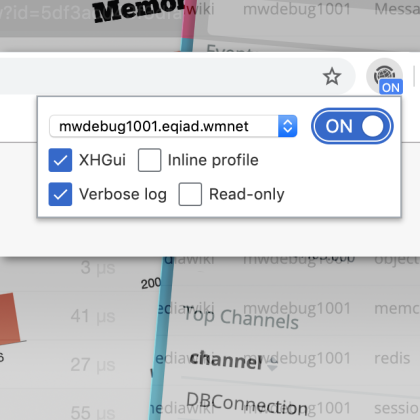

Each year, the Google Code-in contest brings together students and mentors from around the world to improve their technical skills and contribute to the Wikimedia movement.